Our agency sucked. Here’s what we did about it.

Here’s the shift that changed everything for us.

Our agency sucked. Not in the obvious way, though. We had great editors, copywriters, media buyers…

For years, we did what most agencies still do.

We treated ads like custom projects.

One script that gave us 10-15 ad iterations, almost exclusively centered around one “perfect” idea.

We always knew that the “perfect idea” was just the best hypothesis we could come up with. We were open about it. But we didn't act like it was just a hypothesis.

Weeks of revisions.

Polishing.

Debating tiny details.

Then we launched it and hoped it worked.

Sometimes it did.

Most of the time it didn’t.

And when it didn’t… we had almost nothing to learn from.

Because we weren’t actually testing a system. And the scale at which we were testing wasn't massive.

Truth be told, Google did limit how much we could test for clients who weren't willing to spend really big bucks on it.

Meanwhile, the platforms were changing.

And this time, luckily for our clients and us, the change was in our favor.

Demand Gen fundamentally changed how creative testing works.

Behind the scenes, the platforms began relying heavily on bandit algorithms, systems that can predict the potential success of an ad after only about 1,000 impressions.

If you think about that for a second…

1,000 impressions cost between $2 and $8 in most markets.

That means you can test a single creative idea for the price of a cup of coffee.

For years, the industry (us included) repeated the same advice:

“Every ad needs equal spend.”

“You must A/B test everything evenly.”

But Google stopped working that way.

For me personally, that realization was huge.

I joined Inceptly six years ago as a media buyer.

And honestly, I hated the constraints.

Media buying always felt like optimizing inside a cage.

You could tweak budgets.

Adjust targeting.

Push bids up or down.

But the real lever, the thing that actually moves performance, was always creative.

Eventually, I switched into the creative team because I wanted to work on that lever directly.

And that’s when something interesting started happening internally.

Our media buying team, especially Bobo and Vesna, kept coming to us with the same observation.

“Now that Demand Gen works this way… we can test a lot more.”

Bobo, who besides being a world-class media buyer also happens to be a physics professor, started explaining how the platform algorithms behave.

Not in marketing terms.

In mathematical terms.

He described them exactly like multi-armed bandit problems, systems designed to explore multiple options quickly and then exploit the ones that show early statistical advantage.
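For readers who want to see the explore-then-exploit idea in motion, here is a minimal sketch of one textbook bandit strategy, Thompson sampling, written in Python. It is not Google's actual algorithm, which isn't public; the click-through rates, the function name, and the impression count are all made up for illustration.

```python
import random


def thompson_sampling(true_ctrs, total_impressions, seed=0):
    """Simulate a Bernoulli bandit over ads using Thompson sampling.

    true_ctrs: hypothetical click-through rates, unknown to the algorithm.
    Returns the number of impressions each ad received.
    """
    rng = random.Random(seed)
    n = len(true_ctrs)
    clicks = [0] * n       # observed clicks per ad
    impressions = [0] * n  # times each ad was shown

    for _ in range(total_impressions):
        # Draw a plausible CTR for each ad from its Beta posterior
        # (uniform Beta(1, 1) prior), then show the ad with the highest draw.
        samples = [
            rng.betavariate(1 + clicks[i], 1 + impressions[i] - clicks[i])
            for i in range(n)
        ]
        chosen = max(range(n), key=samples.__getitem__)
        impressions[chosen] += 1
        # Simulate whether this impression produced a click.
        if rng.random() < true_ctrs[chosen]:
            clicks[chosen] += 1

    return impressions


# Made-up CTRs for five ads; the last one is the strongest.
shown = thompson_sampling([0.010, 0.012, 0.015, 0.020, 0.040], 20_000)
print(shown)
```

In simulations like this, every ad gets a little traffic early on, and impressions then tend to pile up on the ads with the best early numbers. That is the behavior that makes "equal spend for every ad" unnecessary.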

Once we understood that, the implication was obvious.

The constraint wasn't the platform anymore.

It was our creative process that wasn’t built for the way Google campaigns operate in 2026 and beyond.

As soon as you remove one bottleneck, you start noticing the next one.

This time, it wasn't ad spend anymore.

It was how many ads we could write and produce.

Because yes, suddenly we could test 200+ creative variations per week.

But our process definitely couldn’t produce 200 ad iterations per week for every client unless we massively scaled up our production efforts.

So we rebuilt everything from scratch.

Which meant a lot of long calls.

Some exciting.

Some… uncomfortable.

Because the real question wasn’t just how we create ads.

It was how we think about them.

Are ads individual works of art that we polish and iterate with a few hook variations?

Or is there a more systematic way to do it?

Eventually, we landed on an answer.

And that’s when the Modular Creative System was born.

It’s extremely simple.

Every ad we produce now is built from three interchangeable components:

Intro

The pattern interrupt that stops the scroll.

Bridge

The belief shift that makes the message believable.

Core

The decision moment that converts attention into action.

Each part can be swapped, tested, and recombined.

For example: 10 intros × 5 bridges × 2 cores

That’s 100 meaningful ad iterations from the same concept.
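The multiplication is easy to check with a few lines of Python. This is only a sketch of the counting: the component names are placeholders, and real entries would be scripts or video segments.

```python
from itertools import product

# Placeholder component names; real entries would be scripts or clips.
intros = [f"intro_{i}" for i in range(1, 11)]   # 10 intros
bridges = [f"bridge_{i}" for i in range(1, 6)]  # 5 bridges
cores = [f"core_{i}" for i in range(1, 3)]      # 2 cores

# Every intro/bridge/core combination is one ad variation.
variations = list(product(intros, bridges, cores))

print(len(variations))  # 10 * 5 * 2 = 100
print(variations[0])    # ('intro_1', 'bridge_1', 'core_1')
```

Adding one more core to this example would add 50 variations at once, which is why the count grows so much faster than the writing workload.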

This is what we now call our Modular Creative System. It lets us create 100-200 iterations in the time we used to need for 10. And most of that came down to organization.

But the system itself isn’t the breakthrough.

Lots of teams talk about modular ads now.

The real breakthrough is how we operate the system.

Testing 200 ads a week or month means nothing if you can’t answer:

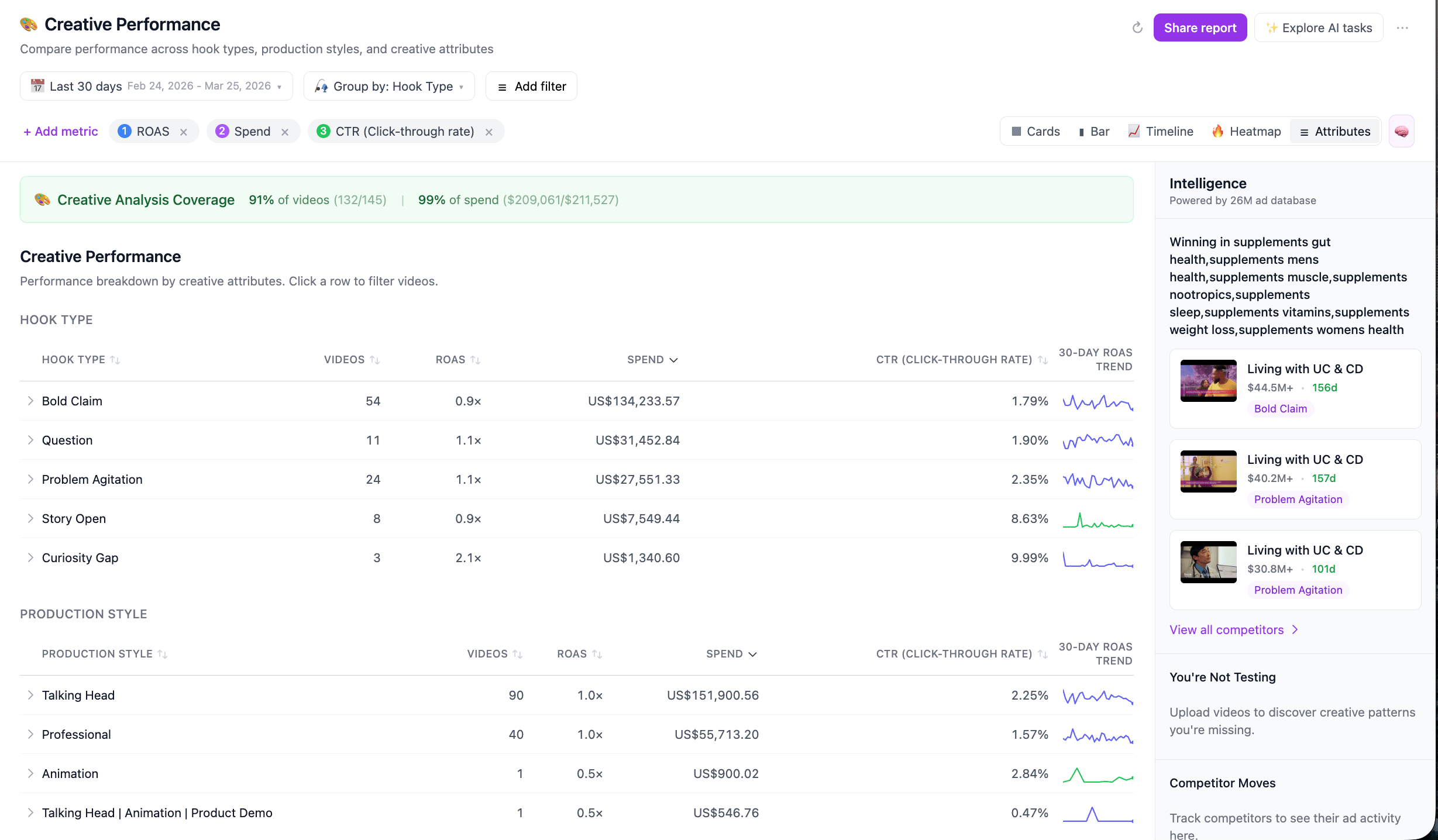

What actually worked

What belief changed

What pattern interrupt drove the click

What failed and why

And this might sound strange…

But I love the ads that fail.

Honestly, almost as much as the ones that win.

Because every losing ad can give us a hint about where the system broke.

Context sometimes makes it crystal clear, sometimes it doesn't.

But we're closer to it than ever before.

Was it the intro?

The belief transition?

The core?

Failure is finally on its way to becoming a useful piece of information.
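One simple way to ask "was it the intro, the bridge, or the core?" is to pool results by component. The Python sketch below does that with made-up numbers; the data layout and function name are assumptions for illustration, not a description of our internal tooling.

```python
from collections import defaultdict

# Made-up results: (intro, bridge, core, impressions, clicks).
results = [
    ("intro_A", "bridge_X", "core_1", 1000, 30),
    ("intro_A", "bridge_Y", "core_1", 1000, 28),
    ("intro_B", "bridge_X", "core_1", 1000, 9),
    ("intro_B", "bridge_Y", "core_1", 1000, 11),
]


def ctr_by_component(rows, slot):
    """Pool impressions and clicks for each value in one slot
    (0 = intro, 1 = bridge, 2 = core) and return its click-through rate."""
    totals = defaultdict(lambda: [0, 0])  # component -> [impressions, clicks]
    for row in rows:
        impressions, clicks = row[3], row[4]
        totals[row[slot]][0] += impressions
        totals[row[slot]][1] += clicks
    return {name: clicks / imps for name, (imps, clicks) in totals.items()}


print(ctr_by_component(results, 0))  # pooled by intro
print(ctr_by_component(results, 1))  # pooled by bridge
```

In this toy data, intro_A pools to a 2.9% click-through rate and intro_B to 1.0%, while both bridges pool to 1.95%. That points at the intro rather than the bridge. Pooling like this ignores interactions between components, so it is a hint, not a verdict.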

We also didn’t stop at theory.

Once the Modular Creative System started working, we realized something else:

the system only works if you can operate it at scale.

So we built our own internal workspace around it.

Inside it, we can instantly see:

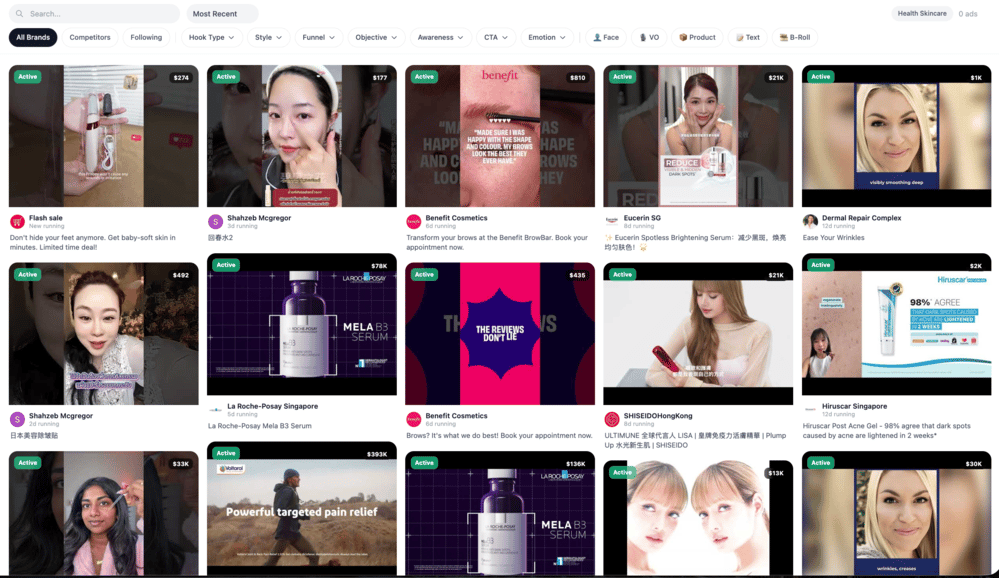

all relevant competitors and how much they are spending on their ads

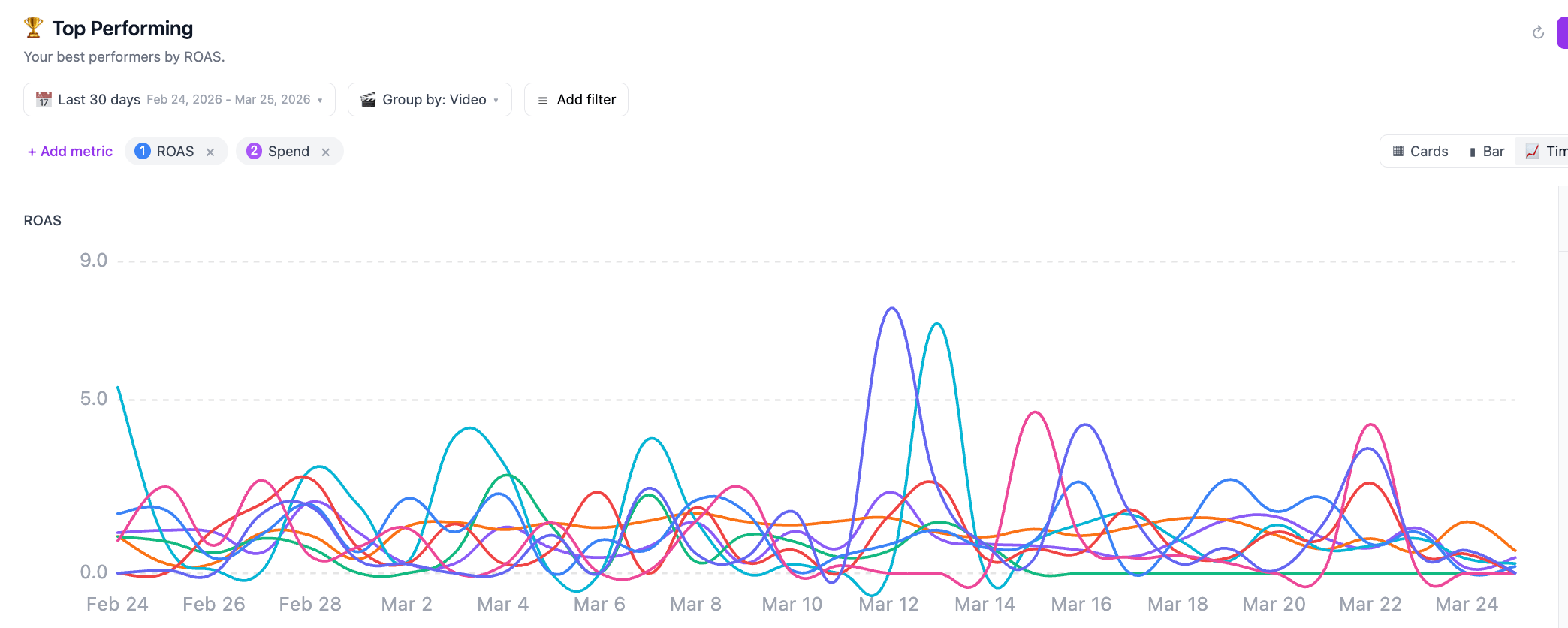

which creatives are scaling and which ones are dying

how long ads survive before the algorithm kills them

what angles competitors are repeating across campaigns

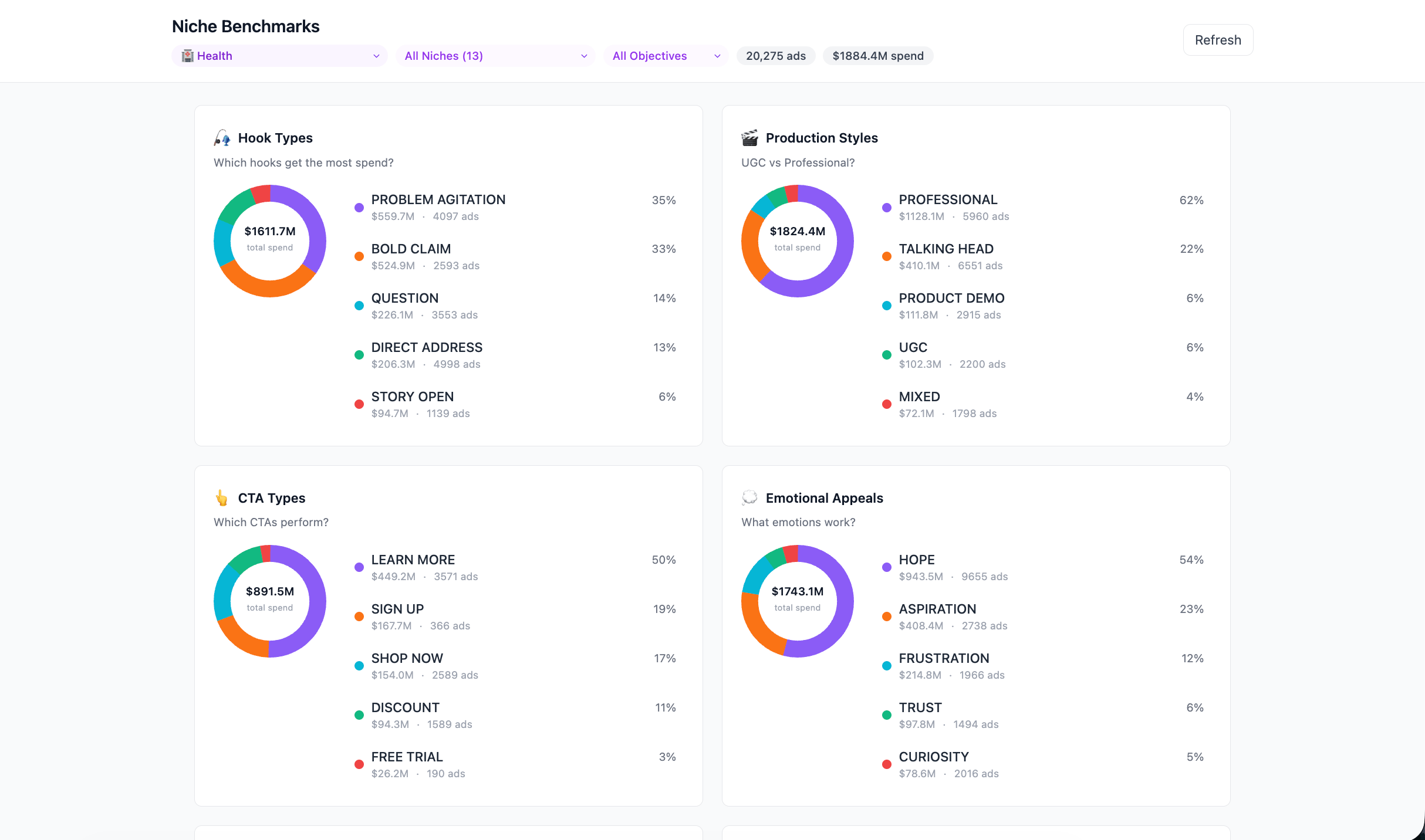

We integrated the industry data directly into our workspace, so our team gets a constantly updating feed of competitor activity.

That means when a new campaign launches, we don’t start from zero.

We already know:

what the category is testing

what angles are saturated

what patterns are scaling

From there, the same workspace lets us:

generate modular script variations

track every intro/bridge/core combination we test

see exactly which belief shifts are winning or losing

and produce new creative iterations directly inside the system.

So instead of guessing, our team now runs creative testing like a structured research process.

And that’s what actually allows us to ship 200+ meaningful ad variations per week while still knowing exactly what worked, what didn’t, and why.

This is the biggest shift our creative team has made since the agency started.

And it completely changed how we scale our clients’ campaigns.

If you want to see how the system works on a real campaign, including the workspace, reply “SYSTEM” to learn more about it.

Alex Simic

Creative Lead

Inceptly