The 1 AI tool a good video editor can't live without in 2026🛠

Your creative team isn't slow because they're bad at their jobs. They're slow because they're still operating like it's 2023: rebuilding full assets every time a hook dies, sourcing B-roll for every new concept, waiting on shoot days to test a single angle change. There's one platform that fixes most of this. And most teams still haven't properly committed to it.

We go through a lot of creative here. Across the accounts we run, we're testing multiple hook variants per concept per week — VSLs, UGCs, 15-second cuts, 60-second brand plays. The teams that keep pace with that volume aren't necessarily bigger. They just use their tools differently.

If we had to point to one platform that changed how fast a lean creative team can actually move in 2026, it's Higgsfield. Not because it does one thing well. Because it does the whole production stack — video generation, character consistency, motion control, lipsync, product placement, UGC creation, visual effects — inside one interface, without the tool-switching tax that kills momentum.

Here's how we actually use it, and where it earns its place in a real ad creative workflow.

Kling motion control — for hook testing without a shoot day

This is where most teams start, and it's still the feature with the clearest ROI for performance creative.

Kling 3.0's motion control lets you spec camera path, shot angle, and subject movement before you generate a single frame. Push-in on a product, orbital shot, top-down reveal — you define the movement, it executes it consistently across as many variants as you need. That means when you're testing 10 different hooks against the same product shot, you're not dealing with 10 different visual languages. Same controlled push-in. 10 different opening lines. Clean, comparable data.

What this kills: the "we need a new shoot" conversation. You can generate a controlled, professional-looking product shot with specified motion in the time it used to take to brief a videographer.

The older complaint about AI video — that outputs were too visually inconsistent to use in real ads — is mostly solved here. Motion control is what fixes consistency. Without it, every generated clip looks like it came from a different director. With it, you can build a batch of hook variants that actually look like they belong together.
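To make the "same shot, different hook" idea concrete, here is a minimal planning sketch in Python. It is not Higgsfield's or Kling's API. The `ShotSpec` and `HookVariant` names, their fields, and the ID format are all hypothetical, chosen only to show how a test batch can hold the visual constant while the opening line varies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShotSpec:
    """Hypothetical description of the controlled shot (not a real Kling/Higgsfield schema)."""
    subject: str
    camera_move: str
    angle: str


@dataclass(frozen=True)
class HookVariant:
    """One entry in the test batch: a unique hook line on top of a shared shot."""
    variant_id: str
    hook_line: str
    shot: ShotSpec


def build_hook_batch(concept: str, shot: ShotSpec, hooks: list[str]) -> list[HookVariant]:
    """Pair every hook line with the same shot so the hook is the only variable."""
    return [
        HookVariant(variant_id=f"{concept}-h{i:02d}", hook_line=hook, shot=shot)
        for i, hook in enumerate(hooks, start=1)
    ]


# Placeholder inputs: one controlled push-in, ten opening lines.
shot = ShotSpec(subject="product", camera_move="push-in", angle="eye-level")
hooks = [f"Placeholder opening line {n}" for n in range(1, 11)]
batch = build_hook_batch("demo", shot, hooks)

for variant in batch:
    print(variant.variant_id, "|", variant.shot.camera_move, "|", variant.hook_line)
```

Because every variant carries the identical shot spec, any difference in performance between them can be attributed to the hook line rather than to a change in visuals.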

One honest limitation: it's still not where you want to be for realistic human dialogue. Faces in sustained motion can drift. Use it for product shots, B-roll, environment builds, abstract transitions — not as a direct replacement for a UGC creator on camera.

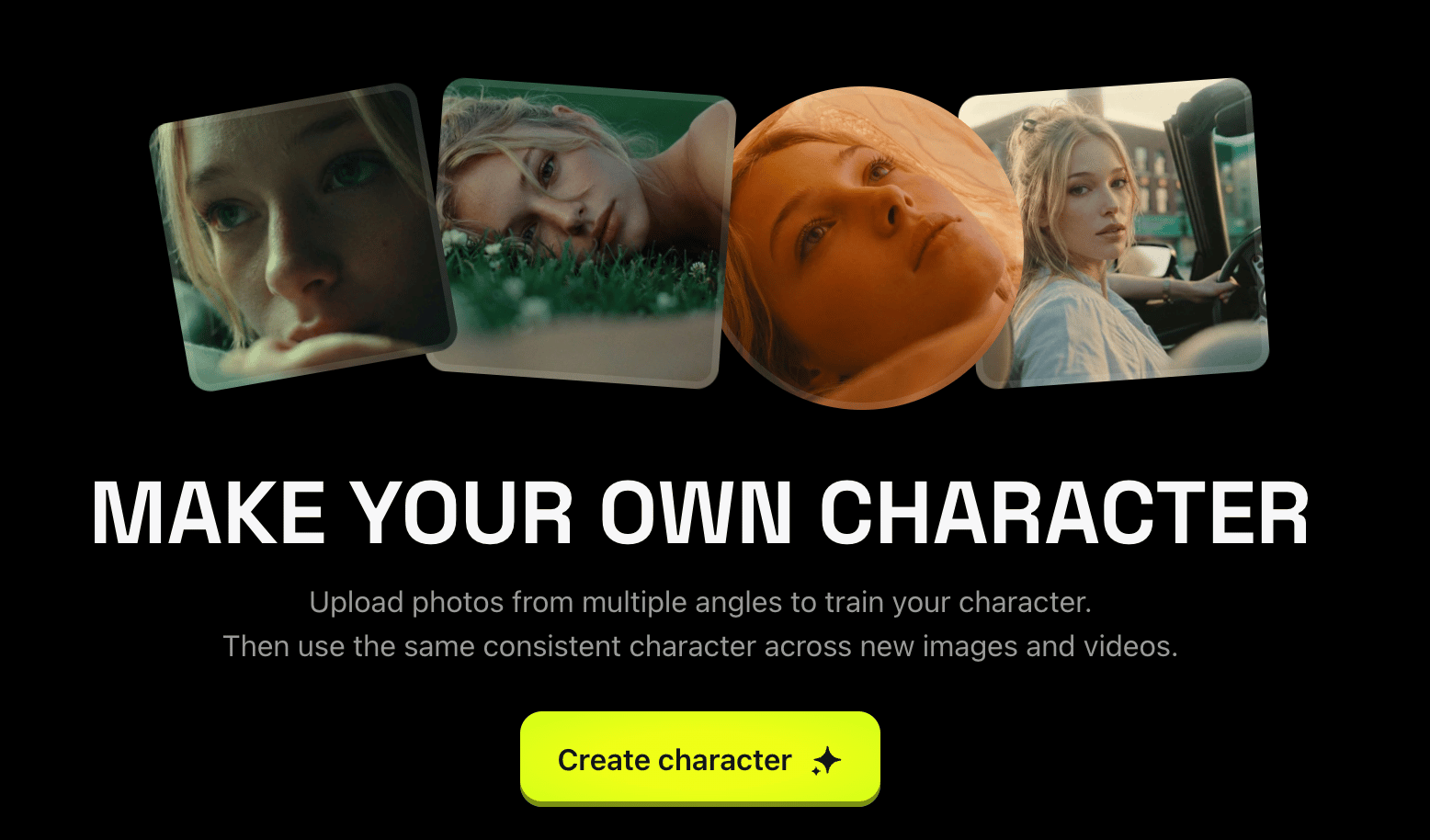

UGC Factory + Soul ID — consistent characters without consistent talent

This one matters a lot for brands scaling YouTube, and it's genuinely underused.

Soul ID lets you build a character — face, style, look — once, and then place that character in any scene, angle, or visual context you generate. Combined with Higgsfield's UGC Factory, you can produce UGC-style creative at batch volume without sourcing new talent, scheduling shoots, or negotiating usage rights every time.

The practical application: you have a winning UGC performer. Their hook works. But you need 8 hook variants and a different environment for each one (gym, kitchen, outdoor, product close-up). With Soul ID, you generate all 8 without touching the talent again. The character is consistent. The context changes. The test batch ships this week, not three weeks from now.

This is also where Higgsfield's commercial faces app comes in — pre-built characters that are cleared for ad use. If you don't have a Soul ID character yet, this is the fastest path to UGC-style creative that doesn't require sourcing talent or signing model releases.

There's real nuance here around authenticity. Buyers of high-consideration products (health, finance, supplements) are increasingly sharp about spotting AI-generated faces. Know your offer and your audience. For some categories, this is a total non-issue. For others, it needs to be blended with real talent rather than used as a full replacement.

Lipsync Studio + dubbing — the one that actually extends reach without rebuilding anything

Most people know Higgsfield has a lipsync tool. Fewer people are using the dubbing capability properly, and it's one of the most straightforward ways to expand the geographic reach of a winning creative without touching the production.

Here's how it works in practice: you have a VSL that's converting on English-language YouTube. The hook works, the offer works, the pacing works. You want to test it in a Spanish-speaking market or run it against a different country's audience. Traditionally, that means a new recording, a new edit, a new export. At a minimum, that's a few days. More likely a week or more if you're coordinating across teams.

With Higgsfield's dubbing, you feed in the original video. It generates a dubbed version with synced lip movement in the target language, preserving the original vocal tone and pacing. The creative is the same. The language changes. You're testing a new market in hours, not weeks.

The implication for scaling: a winning creative is no longer locked to one language market. Every strong performer in your library is now a starting point for geographic expansion — without proportional increases in production cost or timeline.

The lipsync tool also handles talking avatar creation, which is useful for founder-style creatives, explainer formats, or any video where you want a speaking character but don't want to be on camera. Pair it with Soul ID, and you can build a recognizable AI presenter that appears consistently across your creative library.

Why one platform matters more than you'd think

The standard creative workflow has a hidden cost that nobody talks about: tool-switching. Your editor generates a video in one tool, downloads it, imports it into another to add motion, exports that, brings it into the editing suite, and exports again. Every transfer has latency. Every format mismatch is a debug session. Every new tool login is a context switch.

Higgsfield isn't perfect at everything. No AI platform is, and any honest review will tell you that. Sustained realistic motion with human faces still has limits. Some of the VFX presets are better suited to social content than to direct response. The output quality varies by model, and you'll need to test which models fit which use cases in your workflow.

But the consolidation point is real. When your editor can handle concept generation, motion control, character consistency, product placement, dubbing, and lipsync inside one interface — without re-exporting between tools — they move faster. And in a creative testing environment where speed of iteration is directly tied to CPA performance, that's not a minor benefit.

The brands winning on YouTube right now aren't the ones with the biggest production budgets. They're the ones shipping the most structured tests, the fastest. If your current creative workflow is the bottleneck between "hook idea" and "live in the account," that's where to look first.

If creative velocity is the bottleneck, let's look at the system!

We can walk through your current production workflow and show you exactly where the slowdowns are costing you scale. No slides. Just an honest look at what's getting in the way and what we'd change first.

Miro Matviichuk, Head of Production. Leading creative production at scale, turning strategy into results-driven execution.
